Real-time image processing means something specific in enterprise operations: visual data analyzed at the point of capture, with structured outputs that reach operational workflows fast enough to affect the decision or action the image was captured for.

A manufacturing inspection that identifies a defective component after the production run ends is less valuable than one that flags it before the next production step. A document classification that routes a form to the wrong queue and requires manual correction is less valuable than one that classifies it correctly on intake. The analytical capability is only as useful operationally as the speed and accuracy with which it produces results the workflow can act on.

Claude Vision in real-time workflows is not about maximizing processing speed at the expense of analytical depth. It is about architecting visual analysis pipelines that produce accurate, structured outputs fast enough to influence the operational decisions they were designed to support.

Overview

Real-time image processing with Claude Vision requires architecture decisions that balance analytical quality with throughput requirements — optimizing API calls for the specific output each use case requires, building queuing infrastructure that prevents throughput bottlenecks, designing output delivery that meets the latency requirements of the downstream workflow, and implementing quality monitoring that detects degradation before it affects operational outcomes.

- Real-time visual processing requires latency architecture matched to the specific workflow’s time requirements

- Prompt optimization for visual tasks is the primary lever for reducing per-image processing latency

- Queuing infrastructure prevents throughput spikes from creating latency degradation

- Parallel processing of independent images raises aggregate throughput without requiring any individual image to be processed faster

- Quality monitoring in real-time pipelines requires alerting that triggers before degradation reaches operational workflows

The 5 Whys

- Why is latency architecture the primary design challenge for real-time visual AI, not processing speed? Model inference time for a given image and prompt is largely outside the pipeline's control. Latency from the enterprise workflow’s perspective is the time from image capture to actionable output delivery. That total includes image transmission, API call overhead, response processing, output validation, and delivery to the downstream system, not just the model inference time. Architecture decisions across each of those stages determine end-to-end latency.

- Why does prompt optimization for visual tasks directly reduce processing latency? Larger, more complex prompts take longer to process, and they tend to produce longer outputs that take longer to generate, validate, and deliver. Prompts optimized for the specific output each visual task requires, requesting only the analysis and output format the downstream workflow needs, reduce processing time, in large part by keeping the response short.

- Why is parallel processing more effective than speeding up individual requests for high-volume visual pipelines? Individual image processing speed is largely fixed by model inference time. Processing multiple images in parallel multiplies effective throughput, up to the available API rate limit, without requiring each individual image to be processed faster. An architecture that processes independent images across parallel workers achieves higher aggregate throughput than sequential processing at the same per-image speed.

- Why does quality monitoring matter specifically in real-time pipelines? Real-time pipelines that produce incorrect outputs feed those outputs directly into operational decisions before manual review has time to catch them. Quality degradation in a batch processing pipeline is discovered in the next review cycle. Quality degradation in a real-time pipeline affects the operational decisions made from the current cycle’s outputs before the degradation is detected. Real-time alerting on quality metrics is a requirement, not a monitoring enhancement.

- Why does output delivery architecture affect real-time operational value as much as analysis quality? Analysis that completes within the required latency window but is delivered in a format that requires transformation before the downstream system can consume it introduces additional latency at the delivery stage. Output delivery architecture — format, destination, delivery confirmation — determines whether the analysis quality reaches the operational workflow in time to affect it.
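
The quality-monitoring point above can be made concrete with a rolling check that raises an alert as results arrive rather than at a later review. The sketch below is one possible approach, not a prescribed one. It assumes each result carries a model-reported confidence score, and the class name, window size, and thresholds are all hypothetical. Reported confidence is only a proxy for quality; a production pipeline would likely also sample outputs against human-verified labels.

```python
from collections import deque


class RollingQualityMonitor:
    """Track the share of low-confidence outputs over the most recent N results."""

    def __init__(self, window_size: int = 50, min_confidence: float = 0.8,
                 max_low_rate: float = 0.2):
        # Hypothetical defaults; tune them to the workflow's accuracy standard.
        self.window = deque(maxlen=window_size)
        self.min_confidence = min_confidence
        self.max_low_rate = max_low_rate

    def record(self, confidence: float) -> bool:
        """Record one result; return True if the alert threshold is crossed."""
        self.window.append(confidence < self.min_confidence)
        return self.should_alert()

    def low_rate(self) -> float:
        """Fraction of results in the window that fell below min_confidence."""
        return sum(self.window) / len(self.window) if self.window else 0.0

    def should_alert(self) -> bool:
        # Wait for a full window so a single early miss does not trigger an alert.
        window_full = len(self.window) == self.window.maxlen
        return window_full and self.low_rate() > self.max_low_rate
```

Calling `record()` on every result means the alert decision is made inside the pipeline itself, which is the difference between real-time alerting and periodic review.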

Real-Time Visual Processing Architecture

Latency Budget Allocation

Real-time visual processing begins with a latency budget — the total time from image capture to operational output delivery that the downstream workflow requires. That budget is allocated across each pipeline stage:

- Image capture and transmission to processing pipeline

- Queue wait time (near zero at normal operating throughput; the queue acts as a buffer during spikes)

- API call and model inference

- Output validation

- Output delivery and downstream system update

Each stage has a latency budget. Architecture decisions for each stage are constrained by that budget. Stages that exceed their budget require optimization; stages with budget to spare provide buffer for variable workload conditions.
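
As a minimal sketch of the allocation described above, the budget can be written down as data and compared against measured stage timings. The stage names and millisecond values here are illustrative assumptions, not recommendations; the real numbers come from the downstream workflow's latency requirement.

```python
from dataclasses import dataclass

# Hypothetical per-stage budgets in milliseconds (illustrative values only).
STAGE_BUDGETS_MS = {
    "capture_and_transmit": 150,
    "queue_wait": 50,
    "api_call_and_inference": 2500,
    "output_validation": 50,
    "delivery": 250,
}


@dataclass
class StageReport:
    stage: str
    budget_ms: float
    measured_ms: float

    @property
    def headroom_ms(self) -> float:
        """Budget left over; negative when the stage ran over."""
        return self.budget_ms - self.measured_ms

    @property
    def over_budget(self) -> bool:
        return self.measured_ms > self.budget_ms


def check_budget(measured_ms: dict[str, float]) -> list[StageReport]:
    """Compare measured per-stage latencies against the allocated budget.

    Raises KeyError if a stage has no measurement, so gaps are not hidden.
    """
    return [
        StageReport(stage, budget, measured_ms[stage])
        for stage, budget in STAGE_BUDGETS_MS.items()
    ]


def total_budget_ms() -> float:
    """End-to-end budget: the sum of all stage allocations."""
    return sum(STAGE_BUDGETS_MS.values())
```

Stages reported as `over_budget` are the optimization targets, while positive `headroom_ms` elsewhere is the buffer available for variable workload conditions.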

Prompt Optimization for Visual Speed

Prompts for real-time visual processing are optimized against two criteria: the minimum instruction required to produce the required output quality, and the minimum output format required for the downstream workflow to act on it.

- Classify-only tasks (document type, defect presence, condition status) use classification prompts that request a category label and confidence, not a narrative description

- Extract-only tasks (field values from forms, specific visual data elements) use extraction prompts that specify exactly which fields to extract in exactly the format the downstream system requires

- Assess-and-route tasks (inspection pass/fail with routing recommendation) use prompts that produce a structured assessment object — not a reasoning explanation unless the routing requires it
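
A classify-only task from the list above might look like the following sketch. It builds a request body using the image content block shape from Anthropic's Messages API and validates the returned text before anything reaches the downstream system. The label set, prompt wording, `max_tokens` value, and function names are assumptions for illustration, and the model ID is left as a parameter. Confirm the request format against the current API documentation before relying on it.

```python
import base64
import json

# Hypothetical label set for a defect-presence task.
ALLOWED_LABELS = {"pass", "fail", "uncertain"}

CLASSIFY_PROMPT = (
    "Classify the component in this image. "
    'Respond with only a JSON object: {"label": "pass" | "fail" | "uncertain", '
    '"confidence": <number between 0 and 1>}. No other text.'
)


def build_classify_request(image_bytes: bytes, model: str,
                           media_type: str = "image/jpeg") -> dict:
    """Build a request body for a classify-only task (no network call here)."""
    return {
        "model": model,  # placeholder: supply the model ID you actually use
        "max_tokens": 50,  # assumed cap: the task needs one short JSON object
        "messages": [
            {
                "role": "user",
                "content": [
                    {
                        "type": "image",
                        "source": {
                            "type": "base64",
                            "media_type": media_type,
                            "data": base64.b64encode(image_bytes).decode("ascii"),
                        },
                    },
                    {"type": "text", "text": CLASSIFY_PROMPT},
                ],
            }
        ],
    }


def parse_classification(response_text: str) -> dict:
    """Validate the model's text output before it reaches the downstream system."""
    result = json.loads(response_text)
    if not isinstance(result, dict):
        raise ValueError("expected a JSON object")
    label = result.get("label")
    confidence = result.get("confidence")
    if label not in ALLOWED_LABELS:
        raise ValueError(f"unexpected label: {label!r}")
    if isinstance(confidence, bool) or not isinstance(confidence, (int, float)):
        raise ValueError(f"invalid confidence: {confidence!r}")
    if not 0 <= confidence <= 1:
        raise ValueError(f"confidence out of range: {confidence!r}")
    return {"label": label, "confidence": float(confidence)}
```

Asking for a label and a confidence value only, with a low output cap, keeps the response short and makes validation a simple schema check rather than free-text parsing.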

Parallel Processing Infrastructure

Independent images in a processing queue are processed in parallel worker threads:

- Worker count scales with available API capacity — parallel workers do not exceed the rate limit allocation

- Completed results are delivered in the order the operational workflow requires, not the order in which processing happens to finish

- Failed processing attempts route to retry queues without blocking parallel processing of other queue items
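
The three properties above (a bounded worker count, ordered delivery, and non-blocking retries) can be sketched with Python's standard `concurrent.futures` module. This is a simplified illustration: `analyze` stands in for whatever function calls the vision API, `MAX_WORKERS` is an assumed value that should be derived from the actual rate limit allocation, and the retry queue is a plain list rather than a durable queue.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

MAX_WORKERS = 4  # assumption: chosen to stay under the rate limit allocation


def process_batch(
    image_ids: list[str],
    analyze: Callable[[str], dict],
    max_workers: int = MAX_WORKERS,
) -> tuple[list[tuple[str, dict]], list[str]]:
    """Analyze independent images in parallel.

    Returns (successful results in submission order, image IDs to retry).
    """
    results: list[tuple[str, dict]] = []
    retry_queue: list[str] = []
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = [(image_id, pool.submit(analyze, image_id)) for image_id in image_ids]
        # Collecting in submission order gives a stable delivery order,
        # regardless of which worker finishes first.
        for image_id, future in futures:
            try:
                results.append((image_id, future.result()))
            except Exception:
                # A failed image is set aside for retry; the other images
                # were already submitted and keep processing.
                retry_queue.append(image_id)
    return results, retry_queue
```

Threads suit this workload because each worker spends most of its time waiting on network I/O. An `asyncio`-based design would be a reasonable alternative under the same constraints.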

Real-Time Use Cases by Operational Context

- Production line inspection — inspection images captured at defined production checkpoints are classified and assessed in near-real-time, with failed-inspection flags reaching the production supervisor before the affected component exits the inspection station

- Document intake routing — document images received at intake are classified and routed to the correct processing queue within the intake session, without requiring manual classification before routing begins

- Patient intake form processing — clinical intake form images are extracted in real-time during the intake session, with structured field data available for clinical review before the patient encounter begins

- Security documentation review — access event images are reviewed for documentation completeness in real-time, with incomplete documentation flagged to security staff while the access event is still current

A Simple Real-Time Visual Processing Readiness Check

Your real-time visual processing use case is architecturally ready if:

- A specific latency requirement has been defined for the downstream workflow — not “as fast as possible” but a specific time window the output must reach the workflow within

- Prompt design has been optimized for the specific output the downstream workflow requires — not general visual analysis

- Parallel processing infrastructure is in place or planned for the expected peak image volume

- Quality monitoring and alerting are designed for real-time detection — not periodic review

- Output delivery format and destination are defined in terms of downstream system requirements, not AI output format defaults

Final Takeaway

Real-time image processing with Claude Vision is not about achieving the fastest possible inference speed. It is about building end-to-end pipeline architecture that delivers accurate visual analysis outputs to operational workflows within the latency windows those workflows require — consistently, at scale, with quality monitoring that detects degradation before it reaches operational decisions.

The enterprise that achieves that has visual intelligence that changes operational outcomes at the point of action, not after the fact. That is what makes real-time visual processing operationally valuable rather than technically impressive.

Build Real-Time Visual Processing Pipelines With Mindcore Technologies

Mindcore Technologies works with enterprise operations and engineering teams to design and deploy real-time Claude Vision processing pipelines — latency architecture, prompt optimization, parallel processing infrastructure, quality monitoring, and output delivery designed to meet the operational latency requirements of specific workflows.

Talk to Mindcore Technologies About Real-Time Visual Processing Architecture →

Contact our team to define the latency requirements of your visual processing use cases and build the pipeline architecture that meets them.

Frequently Asked Questions

What is real-time image processing in enterprise operations?

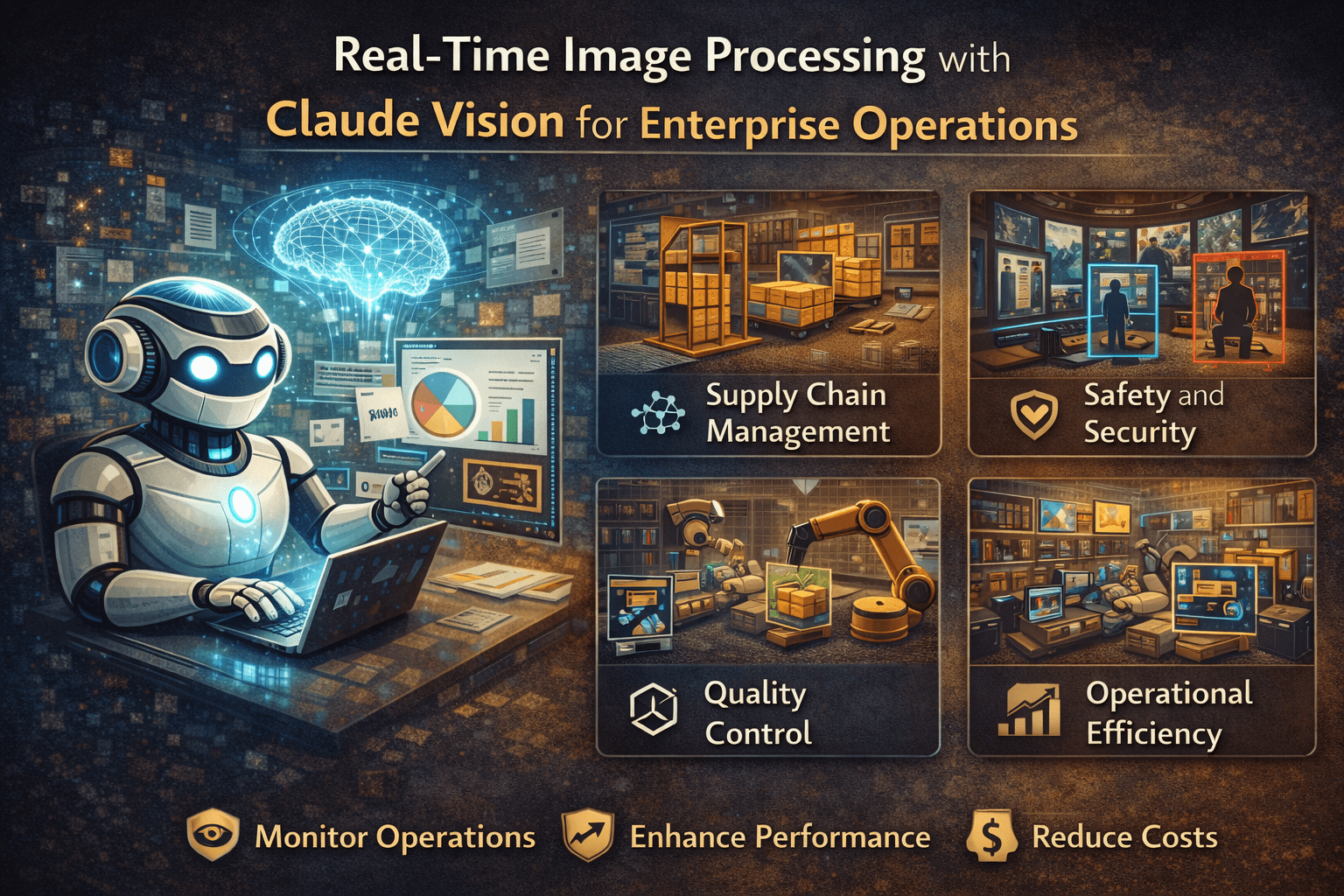

Real-time image processing uses AI systems to analyze visual data from images, video feeds, documents, or cameras as it is captured, fast enough to improve operational visibility, automation, and decision-making across business environments.

How does Claude Vision support enterprise automation?

Claude Vision helps enterprises analyze visual information such as forms, screenshots, equipment images, workflows, and operational documents using AI-driven image recognition and contextual understanding.

What industries benefit from AI-powered image processing?

Industries such as healthcare, manufacturing, logistics, construction, retail, security, and operations management benefit from real-time image analysis for monitoring, automation, compliance, and workflow optimization.

Why is real-time image analysis important for operational efficiency?

Real-time image analysis reduces manual review processes, improves response times, increases visibility into operations, identifies anomalies faster, and helps organizations make quicker operational decisions.

What are common challenges with enterprise AI image processing?

Common challenges include integration complexity, data privacy concerns, infrastructure scalability, inconsistent image quality, compliance requirements, and ensuring AI-generated analysis aligns with operational accuracy standards.

AI Vision Systems and Enterprise Automation Expertise from Matt Rosenthal

Matt Rosenthal, CEO of Mindcore Technologies, has extensive experience helping organizations implement AI-driven operational automation, secure infrastructure strategies, and scalable enterprise technology solutions. His expertise in AI systems integration, operational visibility, cybersecurity governance, cloud architecture, workflow automation, and infrastructure scalability helps businesses improve efficiency while maintaining security and compliance readiness. Matt’s leadership focuses on building intelligent operational frameworks that strengthen automation capabilities, improve decision-making visibility, reduce operational friction, and support long-term digital transformation initiatives through strategic AI automation solutions.