An agent that cannot reach enterprise systems cannot automate enterprise workflows. That sounds obvious. What is less obvious is that the quality of system integration determines not just whether an agent works but whether the automation it produces is reliable, governable, and scalable across the full enterprise system landscape.

System integration for Claude Agents is not a connection problem. It is an architecture problem — one that requires decisions about connectivity protocols, authorization models, data handling across system boundaries, and the operational infrastructure that keeps integrations reliable as the agent deployment scales from a few systems to the full enterprise infrastructure.

Overview

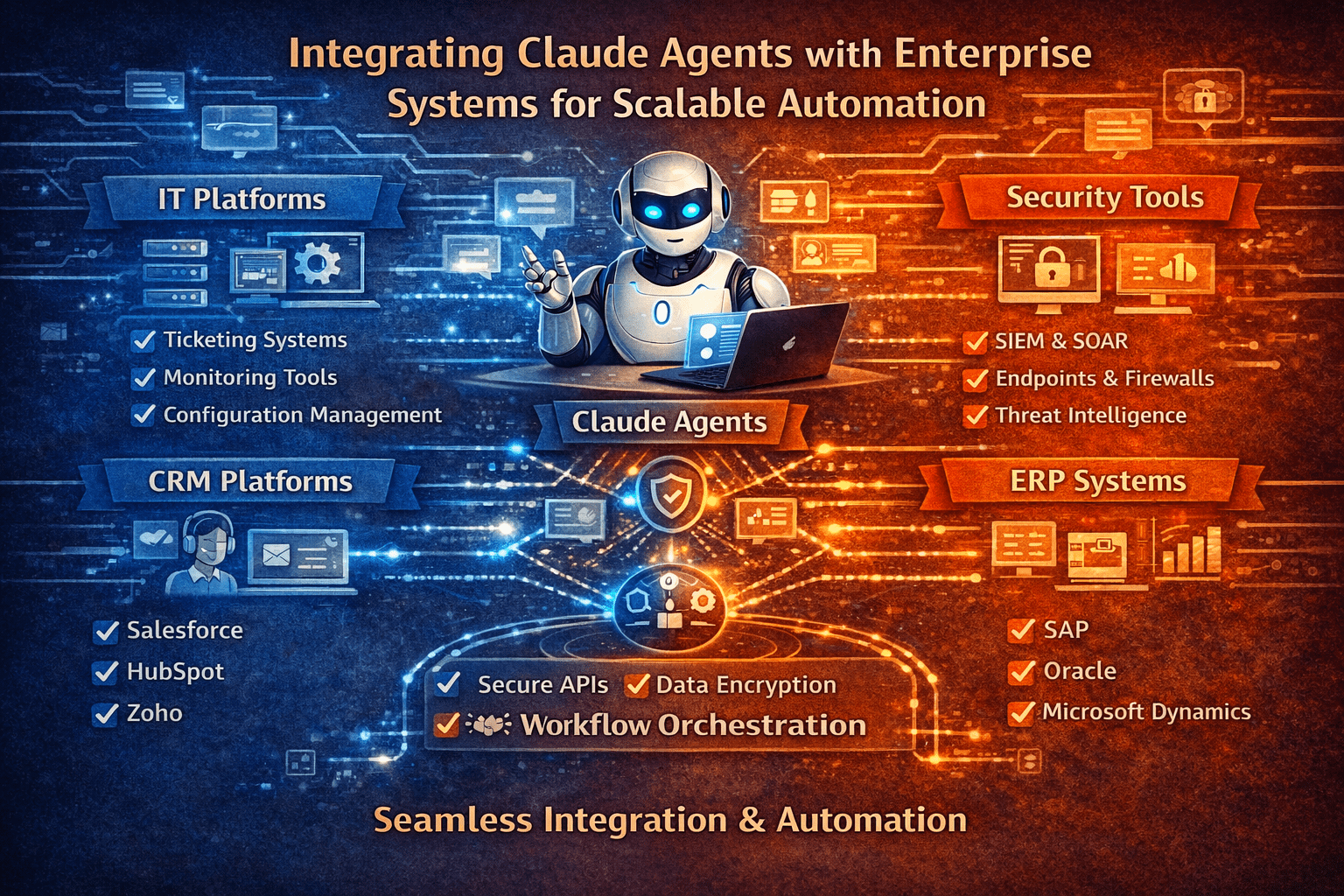

Claude Agent integration with enterprise systems requires four architectural components: connectivity infrastructure (how agents reach systems and execute actions), authorization design (what agents are permitted to access and do in each connected system), data boundary management (how sensitive data is handled as it moves across system connections during agent workflows), and operational infrastructure (how the integration layer is monitored, maintained, and scaled). Each component requires deliberate design. Organizations that design all four build agent automation that scales. Those that focus only on connectivity build agent automation that works at small scale and degrades as it expands.

- Connectivity infrastructure determines how agents reach systems: API connections, MCP, or hybrid approaches

- Authorization design applies least-privilege principles to each system connection independently

- Data boundary management controls how sensitive data flows across system connections during multi-step workflows

- Operational infrastructure provides the monitoring, error handling, and maintenance capability that keeps integrations reliable at scale

- Scalability requires shared integration patterns that new system connections follow without reinventing each component

The 5 Whys

- Why does integration architecture quality determine agent automation reliability as much as agent design quality? Agents are only as reliable as the systems they connect to and the quality of those connections. Integration failures (API timeouts, authentication errors, data format mismatches) break agent workflows regardless of how well the agent logic is designed. Robust integration architecture handles those failure modes gracefully and keeps agent workflows operational under conditions that would break brittle integrations.

- Why does authorization design need to be applied independently to each connected system rather than uniformly across all connections? Each enterprise system has different data sensitivity levels, different action consequence profiles, and different access control capabilities. Authorization design that applies the same access scope to all connected systems either over-provisions access for sensitive systems or under-provisions it for operational ones. System-specific authorization design applies the correct scope to each connection.

- Why does data boundary management matter specifically for multi-system agent workflows? An agent executing a multi-step workflow retrieves data from System A, uses it to determine what to do in System B, and records the result in System C. At each step, data from one system is present in the agent’s context when it interacts with another. Data boundary management controls whether sensitive data from System A can flow into System B’s context, and ensures that each system’s data governance policies apply to the agent’s interactions with it.

- Why does scalability require shared integration patterns rather than per-system custom integrations? Each system-specific custom integration is a unique implementation with unique maintenance requirements. At scale, those requirements accumulate into a maintenance burden that grows proportionally with the number of connected systems. Shared integration patterns that all connections follow reduce that burden — new connections implement the pattern rather than solving the same design problems from scratch.

- Why is operational infrastructure the component that determines whether agent integration remains reliable over time? Even well-designed integrations degrade without operational infrastructure: system updates change APIs, credentials must be rotated, and connection health varies under load. Operational infrastructure that monitors integration health, manages credential lifecycles, and alerts on degradation before it affects agent workflows is what keeps integrations reliable as the deployment matures.

Connectivity Infrastructure Design

API-Based Connectivity

For enterprise systems that expose REST or GraphQL APIs:

- Service account credentials with minimum necessary API scope, stored in enterprise secrets management

- Request/response logging at the integration layer for auditability independent of application-level logging

- Retry logic with exponential backoff for transient failures; circuit breakers for prolonged degradation

- Pinning to explicit API versions to prevent unexpected breaking changes from system updates
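
The retry and circuit-breaker item above can be sketched as follows. This is a minimal illustration under stated assumptions, not a production client: `TransientError`, `CircuitBreaker`, and `call_with_retry` are hypothetical names, and the attempt counts, delays, and cooldown are placeholder values to tune per system.

```python
import random
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Hypothetical marker for retryable failures such as timeouts or HTTP 503."""


class CircuitOpenError(Exception):
    """Raised when the breaker is open and the call is skipped."""


class CircuitBreaker:
    """Opens after `threshold` consecutive exhausted calls; allows a trial call after `cooldown` seconds."""

    def __init__(self, threshold: int = 3, cooldown: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.threshold = threshold
        self.cooldown = cooldown
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None

    def before_call(self) -> None:
        if self.opened_at is not None and self.clock() - self.opened_at < self.cooldown:
            raise CircuitOpenError("system marked degraded; call skipped")

    def record_success(self) -> None:
        self.failures = 0
        self.opened_at = None

    def record_failure(self) -> None:
        self.failures += 1
        if self.failures >= self.threshold:
            self.opened_at = self.clock()


def call_with_retry(fn: Callable[[], T], breaker: CircuitBreaker,
                    max_attempts: int = 4, base_delay: float = 0.5,
                    sleep: Callable[[float], None] = time.sleep) -> T:
    """Retry transient failures with exponential backoff and full jitter."""
    breaker.before_call()
    for attempt in range(max_attempts):
        try:
            result = fn()
        except TransientError:
            if attempt == max_attempts - 1:
                # Retries exhausted: count one failure against the breaker.
                breaker.record_failure()
                raise
            # Delay ceiling doubles each attempt: base, 2*base, 4*base, ...
            sleep(random.uniform(0, base_delay * 2 ** attempt))
        else:
            breaker.record_success()
            return result
    raise RuntimeError("unreachable")
```

Retries absorb short transient failures, while the breaker stops the agent from hammering a system that has been failing for longer. Passing `sleep` and `clock` as parameters keeps the sketch testable without real waiting.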

MCP Protocol Connectivity

For systems that implement the Model Context Protocol:

- MCP server configuration that scopes available tools and data access to the agent’s defined workflow requirements

- Authentication integration with enterprise identity infrastructure

- Action authorization enforcement at the MCP server level — actions outside authorized scope return authorization errors, not silent execution failures
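
To illustrate server-side scoping and explicit authorization errors, here is a minimal sketch in plain Python. It does not use any real MCP SDK; `AgentScope`, `ScopedToolRegistry`, and `AuthorizationError` are hypothetical names standing in for whatever the MCP server implementation provides.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, FrozenSet, List


class AuthorizationError(Exception):
    """Returned to the agent when a tool call falls outside its scope."""


@dataclass(frozen=True)
class AgentScope:
    agent_id: str
    allowed_tools: FrozenSet[str]


class ScopedToolRegistry:
    """Hypothetical server-side registry that exposes only in-scope tools."""

    def __init__(self) -> None:
        self._tools: Dict[str, Callable[..., Any]] = {}

    def register(self, name: str, handler: Callable[..., Any]) -> None:
        self._tools[name] = handler

    def visible_tools(self, scope: AgentScope) -> List[str]:
        # The agent only ever sees tools its workflow requires.
        return sorted(n for n in self._tools if n in scope.allowed_tools)

    def call(self, scope: AgentScope, name: str, **kwargs: Any) -> Any:
        # Fail loudly with an authorization error instead of silently
        # doing nothing when the action is out of scope.
        if name not in scope.allowed_tools:
            raise AuthorizationError(
                f"agent {scope.agent_id!r} is not authorized to call {name!r}"
            )
        if name not in self._tools:
            raise KeyError(f"unknown tool {name!r}")
        return self._tools[name](**kwargs)
```

Because the check lives in the registry rather than in the agent prompt, an out-of-scope request produces an error the agent and the audit trail can both see.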

Hybrid Connectivity

Many enterprise system landscapes include both API-accessible and MCP-enabled systems. Integration architecture for hybrid environments should provide:

- Consistent authentication patterns across connection types where enterprise identity infrastructure supports it

- Unified audit trail format across API and MCP connections for consistent auditability

- Common error handling patterns that produce consistent agent behavior regardless of connection type
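
One way to picture a unified audit trail is a single record shape that both connection types are normalized into. The sketch below is an assumption-laden illustration: the field names, the outcome vocabulary, and the `from_api_call` / `from_mcp_call` helpers are all hypothetical.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditRecord:
    timestamp: str
    agent_id: str
    system: str
    connection_type: str  # "api" or "mcp"
    action: str
    outcome: str          # "success", "denied", or "error"


def _now() -> str:
    return datetime.now(timezone.utc).isoformat()


def from_api_call(agent_id: str, system: str, method: str, path: str,
                  status_code: int) -> AuditRecord:
    """Normalize an HTTP call into the shared audit shape."""
    if 200 <= status_code < 300:
        outcome = "success"
    elif status_code in (401, 403):
        outcome = "denied"
    else:
        outcome = "error"
    return AuditRecord(_now(), agent_id, system, "api", f"{method} {path}", outcome)


def from_mcp_call(agent_id: str, system: str, tool_name: str,
                  is_error: bool) -> AuditRecord:
    """Normalize a tool invocation into the same shape."""
    outcome = "error" if is_error else "success"
    return AuditRecord(_now(), agent_id, system, "mcp", tool_name, outcome)


def to_json(record: AuditRecord) -> str:
    return json.dumps(asdict(record), sort_keys=True)
```

With one schema, a reviewer can query agent activity across systems without knowing which connection type carried each action.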

Authorization Design by System Type

Each connected system requires an authorization design that reflects its sensitivity and the agent’s workflow requirements:

- Operational systems (CRM, ERP, ITSM) — read and defined write access for specific record types involved in defined workflows; no access to administrative functions or records outside workflow scope

- Financial systems — read access for workflow context; write access restricted to specific transaction types within defined value limits; all writes require Tier 2 or Tier 3 authorization

- Identity and access management systems — read access for workflow context only; any access provisioning or deprovisioning action requires Tier 3 human approval regardless of workflow confidence

- Communication systems (email, messaging) — send access restricted to defined templates and internal recipients for most workflows; external communication requires Tier 3 approval
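
The per-system rules above can be expressed as a small policy table. This is a simplified sketch: the meanings given to Tier 1 and Tier 2, the `FINANCIAL_WRITE_LIMIT` value, and the action names are assumptions made for illustration, and record-type and template restrictions are left out for brevity.

```python
from enum import IntEnum
from typing import Optional


class Tier(IntEnum):
    TIER_1 = 1  # assumption: agent may act without additional approval
    TIER_2 = 2  # assumption: elevated authorization short of human sign-off
    TIER_3 = 3  # human approval, as described in the text


class AccessDenied(Exception):
    """The action is outside the agent's scope for this system type."""


# (system_type, action) -> minimum tier. Unlisted pairs are denied outright,
# which covers administrative functions on every system type.
POLICY = {
    ("operational", "read"): Tier.TIER_1,
    ("operational", "write"): Tier.TIER_1,
    ("financial", "read"): Tier.TIER_1,
    ("financial", "write"): Tier.TIER_2,
    ("iam", "read"): Tier.TIER_1,
    ("iam", "provision"): Tier.TIER_3,
    ("iam", "deprovision"): Tier.TIER_3,
    ("communication", "send"): Tier.TIER_1,
}

FINANCIAL_WRITE_LIMIT = 10_000  # hypothetical value limit


def required_tier(system_type: str, action: str, *,
                  amount: Optional[float] = None,
                  external: bool = False) -> Tier:
    """Return the minimum authorization tier, or raise AccessDenied."""
    try:
        tier = POLICY[(system_type, action)]
    except KeyError:
        raise AccessDenied(f"{action!r} is not permitted on {system_type!r}") from None
    if system_type == "financial" and action == "write":
        if amount is None or amount > FINANCIAL_WRITE_LIMIT:
            raise AccessDenied("financial write outside the defined value limit")
    if system_type == "communication" and external:
        tier = Tier.TIER_3  # external recipients always need human approval
    return tier
```

Keeping the policy as data makes each system's scope reviewable on its own, which is the point of designing authorization per connection rather than uniformly.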

Data Boundary Management Across Systems

- Sensitivity classification inheritance — data retrieved from a classified source system carries that classification through the agent workflow; it cannot flow to a destination system with lower access controls without explicit authorization

- Context scope limits — sensitive data retrieved for a specific workflow step is scoped to that step’s context; it does not persist into subsequent steps’ contexts unless the subsequent step explicitly requires it

- Cross-system data transfer logging — every transfer of data across system boundaries within an agent workflow is logged, enabling data flow audit for compliance and security review
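
Classification inheritance and transfer logging can be sketched together. The four sensitivity levels, the `BoundaryGuard` class, and the `authorized_by` parameter are hypothetical; a real deployment would map these to its own classification scheme and approval records.

```python
from dataclasses import dataclass, field
from enum import IntEnum
from typing import Any, Dict, List, Optional


class Sensitivity(IntEnum):
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    RESTRICTED = 3


class BoundaryViolation(Exception):
    """Data would flow to a system with lower access controls."""


@dataclass(frozen=True)
class TaggedValue:
    """A value that carries its source system's classification with it."""
    value: Any
    source_system: str
    classification: Sensitivity


@dataclass
class BoundaryGuard:
    clearance: Dict[str, Sensitivity]  # highest level each system may receive
    transfer_log: List[dict] = field(default_factory=list)

    def transfer(self, item: TaggedValue, destination: str,
                 authorized_by: Optional[str] = None) -> TaggedValue:
        allowed = (item.classification <= self.clearance[destination]
                   or authorized_by is not None)
        # Log every attempt, allowed or not. Only metadata is recorded,
        # never the sensitive value itself.
        self.transfer_log.append({
            "source": item.source_system,
            "destination": destination,
            "classification": item.classification.name,
            "authorized_by": authorized_by,
            "allowed": allowed,
        })
        if not allowed:
            raise BoundaryViolation(
                f"{item.classification.name} data from {item.source_system} "
                f"cannot flow to {destination} without explicit authorization"
            )
        return item
```

Logging denied attempts as well as completed transfers is a design choice in this sketch; it gives compliance review a record of what the agent tried to do, not only what it did.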

Operational Infrastructure

- Integration health monitoring — connection health, latency, and error rates monitored for every system connection; alerts trigger at degradation thresholds, not at failure

- Credential lifecycle management — automated credential rotation with pre-rotation validation that new credentials work before old credentials are revoked

- Integration change management — system updates that may affect integration connections trigger integration review before they reach production

- Capacity management — request volume across all agent-to-system connections monitored against system capacity limits; load distribution adjustments made before capacity constraints cause agent workflow failures
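
Two of the items above lend themselves to a short sketch: alerting at degradation rather than failure, and validating new credentials before revoking old ones. The threshold fields and the four callables passed to `rotate_credential` are hypothetical stand-ins for a monitoring stack and a secrets manager.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass(frozen=True)
class Thresholds:
    degraded_error_rate: float
    failing_error_rate: float
    degraded_p95_ms: float


def health_status(error_rate: float, p95_ms: float, t: Thresholds) -> str:
    """Classify a connection so alerts can fire at 'degraded', before 'failing'."""
    if error_rate >= t.failing_error_rate:
        return "failing"
    if error_rate >= t.degraded_error_rate or p95_ms >= t.degraded_p95_ms:
        return "degraded"
    return "healthy"


def rotate_credential(issue_new: Callable[[], Any],
                      validate: Callable[[Any], bool],
                      activate: Callable[[Any], None],
                      revoke_old: Callable[[], None]) -> bool:
    """Rotate a credential, revoking the old one only after the new one is proven."""
    candidate = issue_new()
    if not validate(candidate):
        # The old credential stays active, so agent workflows keep running.
        return False
    activate(candidate)
    revoke_old()
    return True
```

The ordering in `rotate_credential` is the important part: a failed validation leaves the integration exactly as it was, so a bad rotation becomes an alert instead of an outage.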

Final Takeaway

Claude Agent integration with enterprise systems is the infrastructure layer that determines whether autonomous workflow execution is reliable, governable, and scalable — or whether it works in controlled environments and degrades when exposed to the variability, scale, and change rate of production enterprise operations.

The organizations that build integration architecture as deliberately as they build agent logic produce agent automation that scales across the full enterprise system landscape. Those that treat integration as a solved problem after the first connections work discover the gaps when the deployment grows and the infrastructure that was sufficient at small scale becomes the bottleneck at enterprise scale.

Build Scalable Agent System Integration With Mindcore Technologies

Mindcore Technologies works with enterprise IT and engineering teams to design and deploy Claude Agent system integration architecture — connectivity design, authorization models, data boundary management, and operational infrastructure that scales agent automation reliably across the full enterprise system landscape.

Contact our team to assess your enterprise system landscape and design the integration architecture that supports scalable agent automation across it.