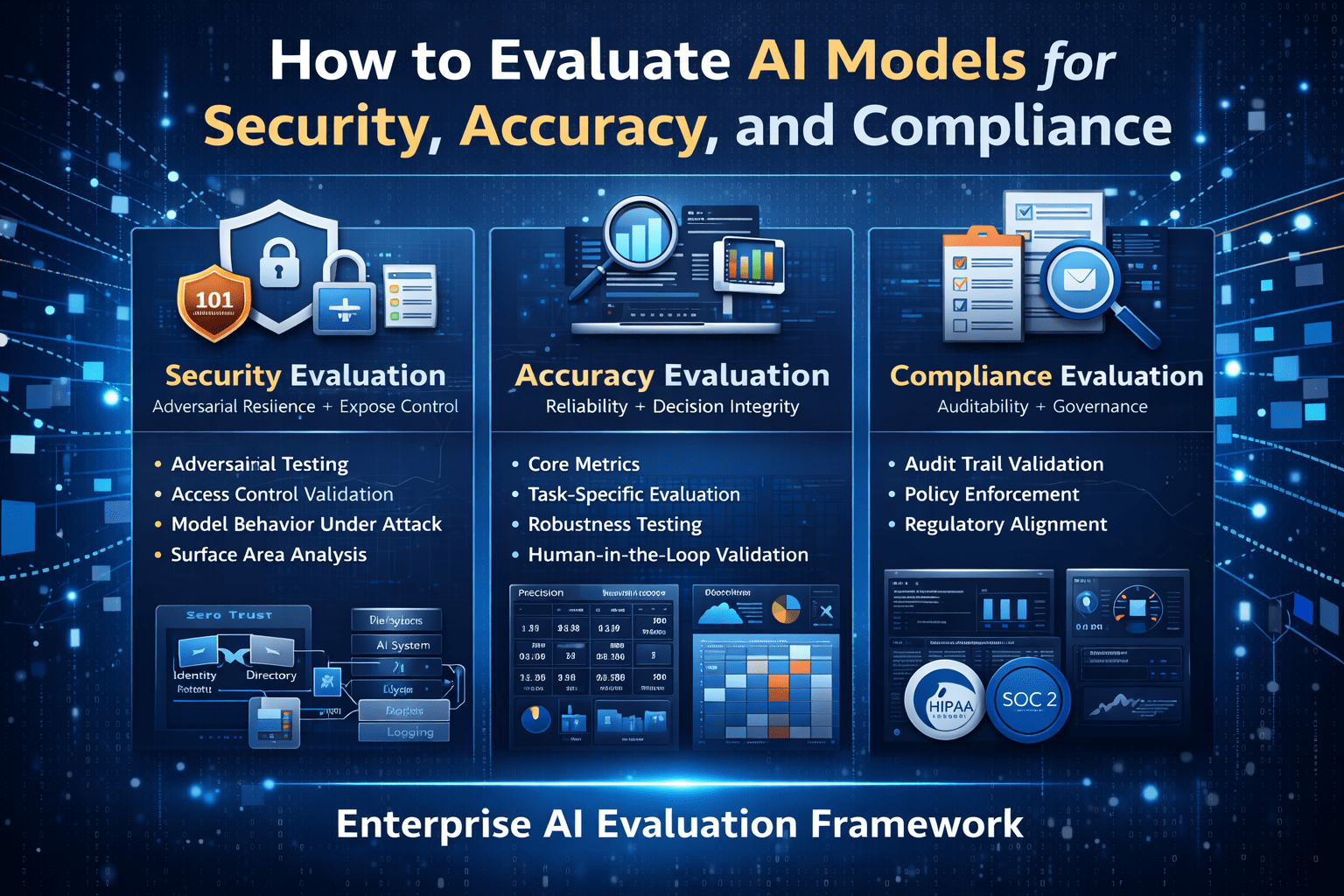

Enterprise AI model evaluation is not a single assessment. It is three parallel assessments — security posture, output accuracy, and compliance alignment — that must all meet defined thresholds before a model is appropriate for production deployment in regulated enterprise environments.

Organizations that evaluate on only one or two dimensions deploy models that perform well on the measured dimensions and fail on the unmeasured ones. The security team approves a model that the compliance team later finds undeployable. The accuracy evaluation clears a model that the security assessment would have flagged. The compliance review approves a model whose production accuracy is insufficient for the workflow it was intended to support.

Structured evaluation across all three dimensions — before deployment, not after — is the practice that prevents those failures.

Overview

AI model evaluation for enterprise security, accuracy, and compliance requires distinct methodology for each dimension, defined thresholds that represent acceptable performance for the specific deployment context, and documented evaluation evidence that satisfies the governance, legal, and compliance review requirements that production deployment in regulated environments demands.

- Security evaluation assesses data handling policies, access architecture, safety behavior, and adversarial input resistance

- Accuracy evaluation assesses output quality on task-specific test sets derived from actual production input distributions

- Compliance evaluation assesses alignment with applicable regulatory requirements and enterprise policy standards

- Each dimension has independent acceptance criteria — meeting two of three does not clear a model for production deployment

- Evaluation documentation supports governance review, legal approval, and compliance audit requirements

Security Evaluation

Data Handling Policy Assessment

- Review the provider’s data handling policies for API inputs and outputs — retention, training use, staff access under what conditions

- Verify that a Data Processing Agreement is available and covers applicable regulatory requirements (HIPAA, GDPR, financial regulations)

- Assess whether the enterprise can obtain contractual commitments that address specific data handling requirements not covered by standard terms

- Verify data residency options if applicable regulatory requirements constrain where data can be processed

Deployment Architecture Security Assessment

- Evaluate whether private cloud, VPC, or on-premises deployment options are available for deployments with strict network isolation requirements

- Assess customer-managed encryption key availability for deployments where data encryption key control is a security requirement

- Evaluate network access control options — whether API traffic can be restricted to enterprise-controlled network paths

Safety and Adversarial Input Testing

- Test model behavior on inputs designed to probe safety controls — prompt injection attempts, instructions to override behavior, requests for information outside authorized scope

- Test model behavior on sensitive content types relevant to the deployment context — clinical information, financial advice, legal interpretation — verify contextually appropriate handling

- Test model consistency on boundary inputs — inputs that are near but not clearly within or outside the defined workflow scope — verify predictable handling

Access Control and Audit Assessment

- Verify that API access can be controlled through service account architecture with minimum necessary scope

- Assess audit trail generation capability — what usage data is available, in what format, with what retention

- Evaluate rate limiting and abuse control mechanisms available at the API level

Accuracy Evaluation

Test Set Construction

- Build test sets from actual production input samples — not generic test data

- Include the full range of input types the workflow generates, weighted by approximate production frequency

- Include deliberate edge cases and tail inputs that appear infrequently but have significant consequence if mishandled

- Define ground truth labels through expert human review, not model self-evaluation

Accuracy Measurement

- Evaluate on the task-specific test set using criteria appropriate to the task type

- Report accuracy at the input type level — not just aggregate accuracy that may mask low performance on specific input categories

- Measure consistency as well as accuracy — repeated evaluation of the same inputs should produce outputs within the acceptable quality range

Threshold Definition

- Define minimum acceptable accuracy for deployment approval based on the consequence of errors in the specific workflow context — not on generic accuracy standards

- Define the acceptable false positive and false negative rates for classification tasks based on the relative cost of each error type in the deployment context

- Define consistency thresholds for automated workflows — inputs that produce high-variance outputs require human review routing regardless of average accuracy

Compliance Evaluation

Regulatory Requirement Mapping

- Identify all applicable regulatory requirements for the deployment context — HIPAA, GDPR, financial regulations, sector-specific requirements

- Map each regulatory requirement to the specific deployment design component it affects — data handling, access control, audit trail, human oversight

- Verify that the deployment architecture addresses each mapped regulatory requirement before compliance evaluation concludes

Enterprise Policy Alignment

- Evaluate alignment with enterprise data classification policies — does the model handle each data classification appropriately

- Evaluate alignment with enterprise AI governance policies — does the deployment meet defined standards for human oversight, output review, and audit trail requirements

- Evaluate alignment with enterprise vendor management requirements — security questionnaire completion, contract terms, insurance requirements

Documentation Requirements

- Verify that the provider offers the compliance documentation required for internal governance review — SOC 2 reports, security certifications, privacy notices

- Verify that the Data Processing Agreement terms satisfy legal review requirements

- Document the compliance evaluation process and findings in a format appropriate for regulatory audit evidence

Evaluation Governance

- Independent evaluation execution — security, accuracy, and compliance evaluations are conducted independently, not combined into a single assessment that allows strength on one dimension to compensate for weakness on another

- Documented acceptance criteria — thresholds for each evaluation dimension are documented before evaluation begins — not determined after results are known

- Governance review requirement — production deployment approval requires documented evaluation evidence reviewed and approved by security, legal/compliance, and technical leadership

- Periodic re-evaluation — evaluation is not a one-time pre-deployment activity; periodic re-evaluation against the same framework detects changes in model behavior, regulatory requirements, or enterprise policy standards

Final Takeaway

AI model evaluation for enterprise deployment is the practice that determines whether a capable AI model is appropriate for a specific regulated enterprise context. Capability without security, accuracy without compliance alignment, or compliance without production accuracy are each insufficient conditions for responsible enterprise deployment.

Structured evaluation across all three dimensions — with documented evidence, defined thresholds, and governance review — is the condition that makes enterprise AI deployment defensible and enterprise AI performance predictable.

Conduct Structured AI Model Evaluation With Mindcore Technologies

Mindcore Technologies works with enterprise security, compliance, and technical teams to design and execute structured AI model evaluations — test set construction, security assessment methodology, compliance requirement mapping, and documentation that satisfies governance review requirements for production deployment approval.

Talk to Mindcore Technologies About AI Model Evaluation for Your Enterprise →

Contact our team to design the evaluation framework for your specific deployment context and regulatory requirements.