Workflows that perform well at low volume frequently do not perform well at high volume. A workflow that processes 50 records per day with sub-second execution per record can fail, time out, or degrade other workflows when processing 50,000 records per day — not because the workflow logic is wrong, but because the architecture decisions that are irrelevant at low volume become critical constraints at high volume.

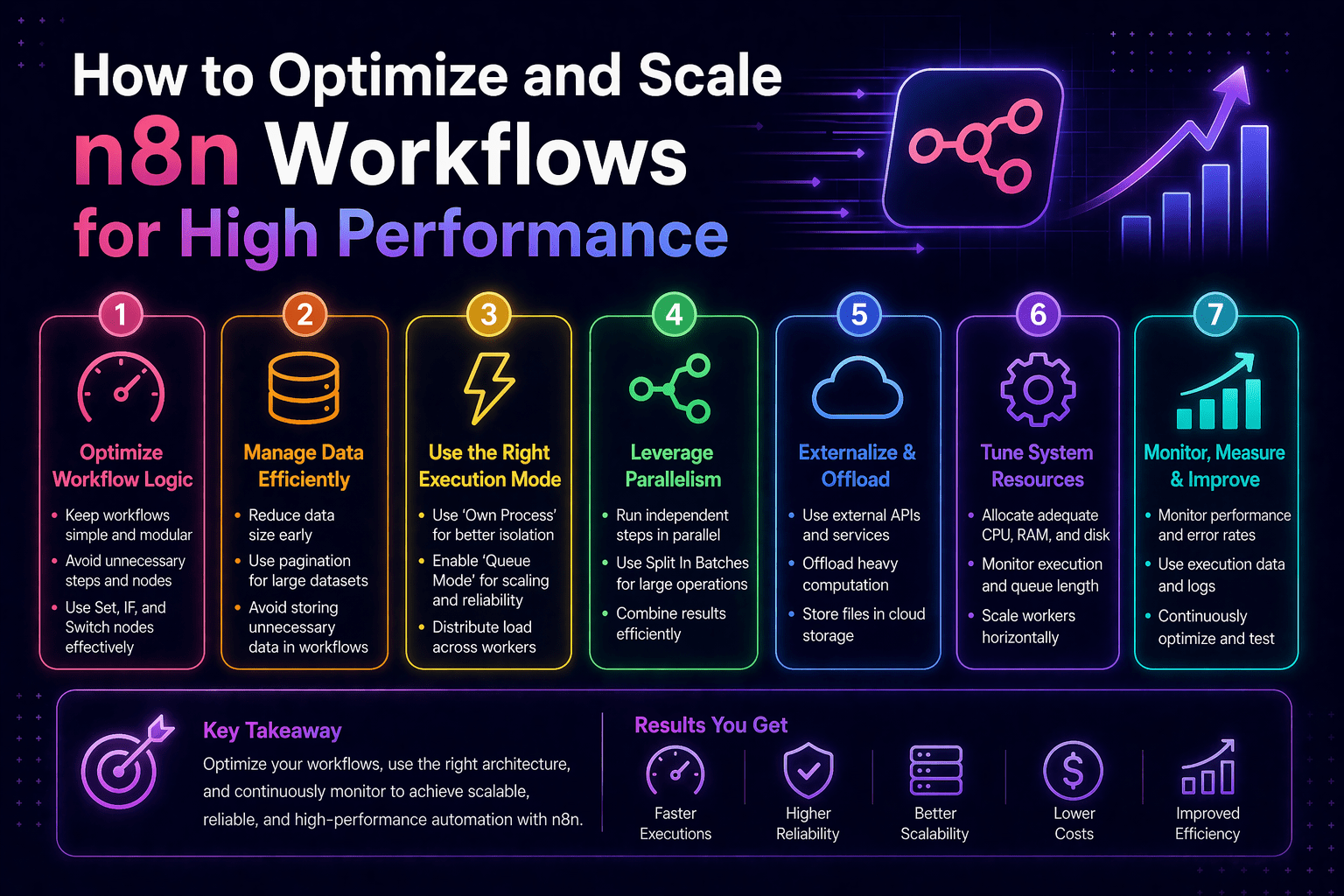

Performance optimization for n8n automation is an architecture discipline, not a tuning exercise. The decisions that determine whether workflows scale — queue mode deployment, concurrency configuration, database query design, API rate limit management, and execution history management — must be made intentionally. Default configurations that work in development become performance bottlenecks in production.

Overview

n8n workflow performance at scale is determined by five architectural variables: execution mode, concurrency configuration, database performance, API rate limit management, and execution history management. Each variable has default configurations that work at low volume but fail at high-volume production scale.

- Queue mode: required for high-concurrency, high-volume workflow execution

- Concurrency: controls how many workflows run simultaneously

- Database performance: determines how n8n handles execution data at scale

- Rate limit management: prevents API failures under load

- Execution history: controls database growth and long-term performance

This aligns with modern AI automation strategies and enterprise scalability practices.

The 5 Whys

Why does queue mode specifically enable high-volume performance?

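Regular mode runs triggers, the editor, and every execution in a single process, so one heavy workflow competes with everything else for CPU and memory. Queue mode has the main instance place executions on a Redis-backed queue, and separate worker processes pick them up. That separation allows horizontal scaling: adding workers adds throughput until the database or a downstream API becomes the limit.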

Why does concurrency configuration matter for API-heavy workflows?

APIs have rate limits. Too many concurrent executions hit those limits, causing failures. Optimal concurrency matches API throughput, not system maximum capacity.

Why does database performance become a constraint at scale?

n8n stores execution history in its database. As that data grows, queries against it slow down unless the columns they filter on are indexed. A query that is fast at 10,000 rows can become a bottleneck at 10 million without optimization.

Why is execution history pruning critical?

Without pruning, execution logs grow indefinitely, consuming storage and degrading performance. Controlled retention maintains system health.

Why does API rate limit handling determine reliability?

At scale, hitting API limits is inevitable. Workflows must detect limits, pause, and retry. Without this, failures become systemic.

Queue Mode Deployment

Queue mode requires additional infrastructure:

- Redis for queue management

- n8n main instance for orchestration

- Worker instances for execution

- Horizontal scaling via additional workers

Configuration steps:

- Deploy Redis

- Set EXECUTIONS_MODE=queue

- Configure worker instances

- Validate execution distribution
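
The configuration steps above can be sketched as the environment each process starts with. `EXECUTIONS_MODE=queue` comes from the steps above. The Redis variable names (`QUEUE_BULL_REDIS_HOST`, `QUEUE_BULL_REDIS_PORT`) and the `n8n worker --concurrency` command are assumptions based on n8n's queue mode documentation and should be checked against your n8n version. The host name `redis.internal` and the helper functions are placeholders for illustration.

```python
from typing import Dict, List


def queue_mode_env(redis_host: str, redis_port: int = 6379) -> Dict[str, str]:
    """Environment shared by the main instance and every worker (assumed names)."""
    return {
        "EXECUTIONS_MODE": "queue",
        "QUEUE_BULL_REDIS_HOST": redis_host,
        "QUEUE_BULL_REDIS_PORT": str(redis_port),
    }


def worker_command(concurrency: int) -> List[str]:
    """Command line for one worker process with a per-worker concurrency cap."""
    if concurrency < 1:
        raise ValueError("concurrency must be at least 1")
    return ["n8n", "worker", f"--concurrency={concurrency}"]


def render_env_file(env: Dict[str, str]) -> str:
    """Render the mapping as KEY=value lines, e.g. for a .env file."""
    return "\n".join(f"{key}={value}" for key, value in env.items())


env = queue_mode_env("redis.internal")  # placeholder host name
print(render_env_file(env))
print(" ".join(worker_command(10)))
```

The main instance and all workers would need the same values so that they read from and write to the same queue.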

Concurrency Optimization

Per-Worker Concurrency:

- Start with 5–10 executions per worker

- Adjust based on memory usage and API limits

- Monitor CPU and execution failures
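
One way to pick a starting value is to work backwards from the API limit rather than from system capacity. The sketch below is a rough sizing rule, not an n8n feature: it assumes each execution makes a known number of API calls spread evenly over its run time, and the function names, the `headroom` parameter, and the example numbers are all hypothetical.

```python
import math


def max_concurrent_executions(
    api_requests_per_second: float,
    calls_per_execution: int,
    avg_execution_seconds: float,
    headroom: float = 0.8,
) -> int:
    """Largest number of simultaneous executions that stays under the API limit.

    Each running execution issues calls_per_execution / avg_execution_seconds
    requests per second on average; headroom keeps a safety margin.
    """
    per_execution_rate = calls_per_execution / avg_execution_seconds
    usable_rate = api_requests_per_second * headroom
    return max(1, math.floor(usable_rate / per_execution_rate))


def per_worker_concurrency(total_concurrency: int, workers: int) -> int:
    """Split the total evenly across workers, never going below 1."""
    return max(1, total_concurrency // workers)


# Hypothetical API: 10 requests/second; each execution makes 4 calls in 2 seconds.
total = max_concurrent_executions(10, 4, 2.0)
print(total, per_worker_concurrency(total, workers=2))
```

If the result is lower than the 5 to 10 range above, the API limit is the binding constraint, and memory and CPU are not.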

Execution Prioritization:

- Separate critical vs background workflows

- Prioritize customer-facing processes

Database Performance Optimization

Index Management:

- Index workflowId

- Index startedAt

- Index status
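
The effect of these indexes can be illustrated with a small, self-contained sketch. It uses an in-memory SQLite database only so that it runs anywhere. The table name `execution_entity`, the index names, and the query are assumptions for illustration, and n8n may already create some of these indexes itself, so inspect the existing schema before adding any.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    """
    CREATE TABLE execution_entity (
        id INTEGER PRIMARY KEY,
        "workflowId" TEXT NOT NULL,
        "startedAt" TEXT NOT NULL,
        status TEXT NOT NULL
    )
    """
)

# A typical history lookup: recent executions of one workflow.
QUERY = """
    SELECT id, status FROM execution_entity
    WHERE "workflowId" = ? AND "startedAt" >= ?
"""


def query_plan(connection: sqlite3.Connection) -> str:
    """Return SQLite's plan for QUERY as one string (4th column is the detail)."""
    rows = connection.execute(
        "EXPLAIN QUERY PLAN " + QUERY, ("wf-1", "2024-01-01")
    ).fetchall()
    return " | ".join(row[3] for row in rows)


plan_before = query_plan(conn)  # full table scan, no usable index yet

# Composite index for the workflow + time filter, plus one for status filters.
conn.execute(
    'CREATE INDEX idx_exec_workflow_started '
    'ON execution_entity ("workflowId", "startedAt")'
)
conn.execute("CREATE INDEX idx_exec_status ON execution_entity (status)")

plan_after = query_plan(conn)  # now searches via the composite index
print(plan_before)
print(plan_after)
```

On a production database the same check would be done with that database's own `EXPLAIN` output against real data volumes.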

Execution History Pruning:

- Enable pruning

- Limit retention to 7–14 days for non-critical workflows

- Cap the maximum number of stored executions
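
As a sketch of how these settings might be expressed, the helper below turns a retention policy into environment variables. The variable names (`EXECUTIONS_DATA_PRUNE`, `EXECUTIONS_DATA_MAX_AGE` in hours, `EXECUTIONS_DATA_PRUNE_MAX_COUNT`) are assumptions based on n8n's documented configuration and should be verified for your version. The helper functions and the 50,000 executions per day figure are illustrative.

```python
from typing import Dict


def pruning_env(retention_days: int, max_executions: int) -> Dict[str, str]:
    """Build pruning settings; max age is expressed in hours (assumed unit)."""
    if retention_days < 1 or max_executions < 1:
        raise ValueError("retention_days and max_executions must be positive")
    return {
        "EXECUTIONS_DATA_PRUNE": "true",
        "EXECUTIONS_DATA_MAX_AGE": str(retention_days * 24),
        "EXECUTIONS_DATA_PRUNE_MAX_COUNT": str(max_executions),
    }


def expected_rows(executions_per_day: int, retention_days: int) -> int:
    """Rough steady-state size of the execution table under this retention."""
    return executions_per_day * retention_days


# 50,000 executions per day kept for 7 days.
rows = expected_rows(50_000, 7)
settings = pruning_env(retention_days=7, max_executions=rows)
print(rows, settings)
```

Estimating the steady-state row count first makes it easier to choose a count cap that does not prune data before the age limit is reached.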

API Rate Limit Management

Retry Pattern:

- Enable retry on fail

- Set retry count and delay

- Handle 429 (Too Many Requests) responses by waiting for the interval the API indicates, such as a Retry-After header, before retrying
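
The retry pattern above can be sketched outside n8n as a small function, for example as the logic a custom client or code step might follow. This is a minimal illustration, not n8n's built-in retry implementation: `call_with_retry`, the `send` callable, and the response tuple shape are hypothetical, and only a `Retry-After` value given in whole seconds is handled.

```python
import random
import time
from typing import Callable, Mapping, Tuple

# (status code, headers, body) returned by the hypothetical `send` callable.
Response = Tuple[int, Mapping[str, str], str]


def call_with_retry(
    send: Callable[[], Response],
    max_retries: int = 5,
    base_delay: float = 1.0,
    max_delay: float = 60.0,
    sleep: Callable[[float], None] = time.sleep,
) -> Response:
    """Retry on 429 and 5xx, preferring the server's Retry-After hint."""
    attempt = 0
    while True:
        status, headers, body = send()
        retryable = status == 429 or 500 <= status < 600
        if not retryable or attempt >= max_retries:
            return status, headers, body
        retry_after = headers.get("Retry-After", "")
        if retry_after.isdigit():
            # The API said how long to wait (seconds form only).
            delay = min(max_delay, float(retry_after))
        else:
            # Exponential backoff with up to 10% jitter to avoid retry bursts.
            delay = min(max_delay, base_delay * 2 ** attempt)
            delay += random.uniform(0, delay * 0.1)
        sleep(delay)
        attempt += 1


# Simulated API: two rate-limit responses, then success. Waits are recorded
# instead of slept so the example finishes immediately.
responses = iter([(429, {"Retry-After": "2"}, ""), (429, {}, ""), (200, {}, "ok")])
waits = []
result = call_with_retry(lambda: next(responses), sleep=waits.append)
print(result[0], waits)
```

Passing `sleep` as a parameter keeps the function testable, and the cap on `max_retries` ensures a persistently failing API surfaces as an error and does not loop forever.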

Volume Management:

- Use batch processing

- Control execution rates

- Use staging tables for large datasets
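
Batch processing and rate control can be combined in one loop, sketched below under simple assumptions. Inside a workflow, a batching node such as n8n's Loop Over Items would play the role of `batched`. The function names, the `handle` callback, and the pacing interval here are hypothetical.

```python
import time
from typing import Callable, Iterable, Iterator, List, TypeVar

T = TypeVar("T")


def batched(items: Iterable[T], batch_size: int) -> Iterator[List[T]]:
    """Yield consecutive lists of at most batch_size items."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    batch: List[T] = []
    for item in items:
        batch.append(item)
        if len(batch) == batch_size:
            yield batch
            batch = []
    if batch:
        yield batch  # final, possibly smaller, batch


def process_in_batches(
    items: Iterable[T],
    batch_size: int,
    min_interval_seconds: float,
    handle: Callable[[List[T]], None],
    sleep: Callable[[float], None] = time.sleep,
) -> int:
    """Handle batches no faster than one per min_interval_seconds."""
    count = 0
    for batch in batched(items, batch_size):
        started = time.monotonic()
        handle(batch)
        count += 1
        remaining = min_interval_seconds - (time.monotonic() - started)
        if remaining > 0:
            sleep(remaining)  # pace the next batch
    return count


seen: List[List[int]] = []
batches_done = process_in_batches(
    range(10), batch_size=4, min_interval_seconds=0.5,
    handle=seen.append, sleep=lambda seconds: None,  # no real waiting in the demo
)
print(batches_done, seen)
```

The batch size bounds memory per step, while the minimum interval bounds the request rate, so the two can be tuned independently.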

Workflow Performance Profiling

- Identify slow nodes

- Monitor execution duration trends

- Detect regressions early
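
A minimal sketch of this kind of profiling, assuming per-node durations have already been exported from execution data into plain lists of milliseconds. The data shape, node names, function names, and the 1.5x threshold are all hypothetical.

```python
from statistics import median
from typing import Dict, List, Tuple


def slowest_nodes(
    node_durations_ms: Dict[str, List[float]], top: int = 3
) -> List[Tuple[str, float]]:
    """Rank nodes by median duration, slowest first."""
    ranked = sorted(
        ((name, median(values)) for name, values in node_durations_ms.items() if values),
        key=lambda pair: pair[1],
        reverse=True,
    )
    return ranked[:top]


def is_regression(
    baseline_ms: List[float], recent_ms: List[float], threshold: float = 1.5
) -> bool:
    """Flag when the recent median exceeds the baseline median by the threshold."""
    if not baseline_ms or not recent_ms:
        return False
    return median(recent_ms) > threshold * median(baseline_ms)


# Hypothetical durations for three nodes of one workflow.
durations = {
    "HTTP Request": [820, 900, 1100],
    "Set": [3, 4, 5],
    "Postgres": [120, 150, 400],
}
top_two = slowest_nodes(durations, top=2)
regressed = is_regression([100, 110, 120], [180, 200, 210])
print(top_two, regressed)
```

Medians are used instead of means so that a single slow outlier does not trigger a false alarm.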

Final Takeaway

n8n performance at scale is an architectural decision, not a reactive fix. Queue mode, concurrency tuning, database optimization, rate limit handling, and execution pruning determine whether your automation scales reliably or fails under pressure.

Optimize and Scale Your n8n Infrastructure With Mindcore Technologies

Mindcore Technologies helps organizations scale n8n automation — queue architecture, performance tuning, database optimization, and rate limit handling that ensure automation performs reliably at enterprise scale.

Schedule your free strategy call to assess your workflow performance and optimize your automation infrastructure.